The latest research from OpenAI put its machine learning agents in a simple game of hide-and-seek, where they pursued an arms race of ingenuity, using objects in unexpected ways to achieve their goal of seeing or being seen. This type of self-taught AI could prove useful in the real world as well.

The study intended to, and successfully did look into the possibility of machine learning agents learning sophisticated, real-world-relevant techniques without any interference of suggestions from the researchers.

Tasks like identifying objects in photos or inventing plausible human faces are difficult and useful, but they don’t really reflect actions one might take in a real world. They’re highly intellectual, you might say, and as a consequence can be brought to a high level of effectiveness without ever leaving the computer.

Whereas attempting to train an AI to use a robotic arm to grip a cup and put it in a saucer is far more difficult than one might imagine (and has only been accomplished under very specific circumstances); the complexity of the real, physical world make purely intellectual, computer-bound learning of the tasks pretty much impossible.

At the same time, there are in-between tasks that do not necessarily reflect the real world completely, but still can be relevant to it. A simple one might be how to change a robot’s facing when presented with multiple relevant objects or people. You don’t need a thousand physical trials to know it should rotate itself or the camera so it can see both, or switch between them, or whatever.

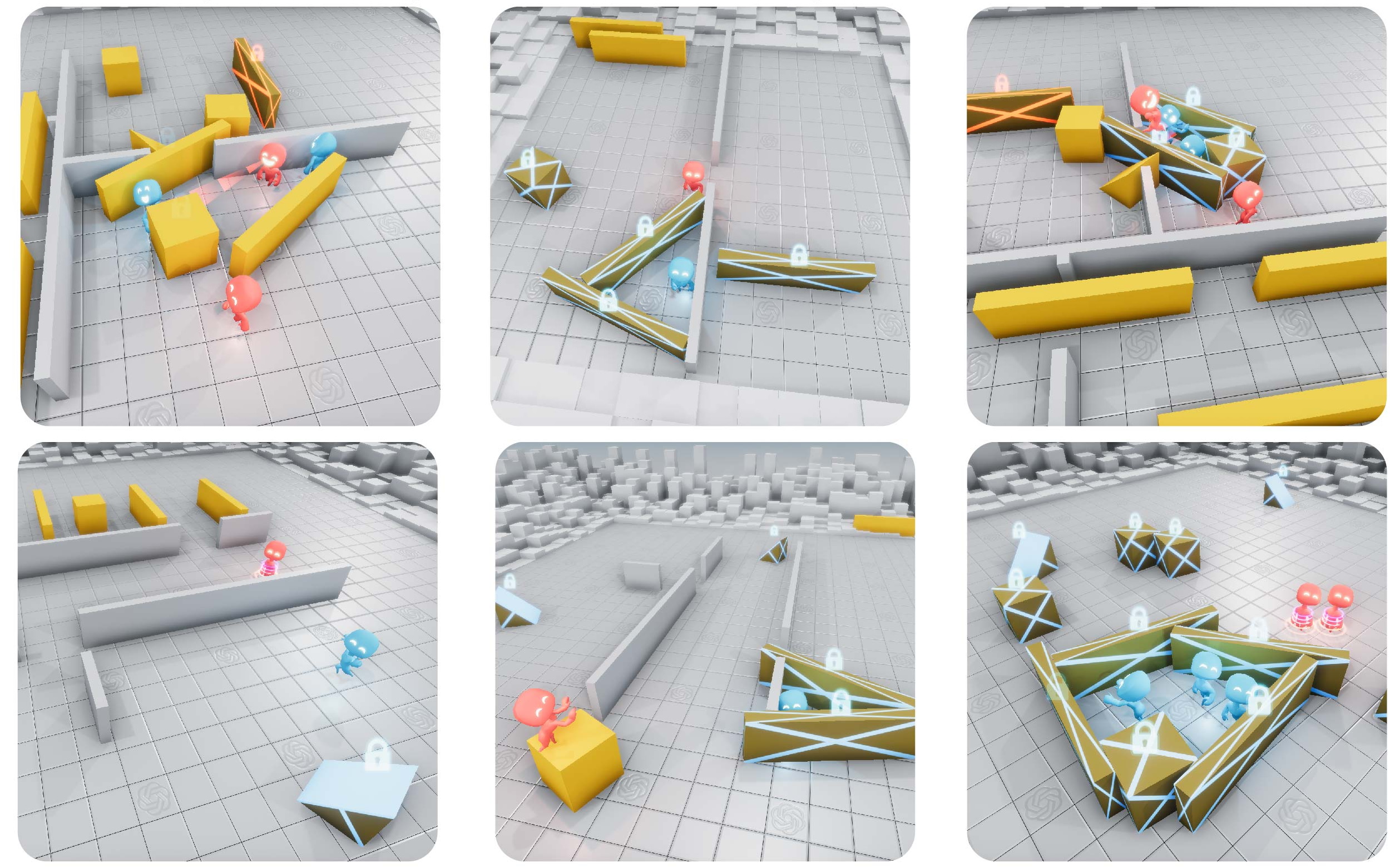

OpenAI’s hide-and-seek challenge to its baby ML agents was along these lines: A game environment with simple rules (called Polyworld) that nevertheless uses real-world-adjacent physics and inputs. If the AIs can teach themselves to navigate this simplified reality, perhaps they can transfer those skills, with some modification, to full-blown reality.

Such is the thinking behind the experiment, anyway, but it’s entertaining enough on its own. The game pits two teams against one another in a small 3D arena populated with a few randomly generated walls and objects. Several agents are spawned in it and the “hiders” are given a few seconds to familiarize themselves with the environment and hide. They can also interact with the objects, pushing them around and locking them in place. Once the “seeker” looks up they have a certain amount of time to spot the hiders.

All the machine learning program was informed of were the basic senses — each agent has a view of the world and a sort of “lidar” to tell them the positions of nearby objects — and the knowledge that they can move objects around. But beyond that they were simply given a point when they succeeded at their job, either seeking or hiding — that’s their motivation.

From these basic beginnings came wonderfully interesting results. At first the teams essentially moved randomly. But over millions of games the hiders found that by moving in a certain way — “crudely” running away — they could increase their points more often. And thus the arms race began.

Since the hiders were running away, the seekers began to develop more structured pursuit behaviors. But then came the tools.

Hiders found that they could push and pull objects on the field, and began constructing forts out of them.

The seekers responded by using ramps found on the field to ascend over the objects and crash the forts.

Hiders began looking for ramps while the seekers were still counting and either stealing them or locking them in place (the lock can’t be undone by an agent of the opposite team). Interestingly this involved a certain amount of division of labor, because there often wasn’t enough time for both hiders to go out in search of ramps; one had to prepare the fort while the other went on ramp patrol.

The OpenAI team thought maybe that was the end of it, but the seekers learned to exploit an unintended feature of the game world. They discovered that they could “push” a box while riding on top of it, meaning they could put it near a ramp, climb onto it, then “surf” it all over the field and find those naughty hiders.

Of course the hiders responded by locking every object they weren’t using to construct their fort — and that seems to be the end of the line as far as strategy in this game.

So what’s the point? As the authors of the paper explain, this is kind of the way we came bout.

The vast amount of complexity and diversity on Earth evolved due to co-evolution and competition between organisms, directed by natural selection. When a new successful strategy or mutation emerges, it changes the implicit task distribution neighboring agents need to solve and creates a new pressure for adaptation. These evolutionary arms races create implicit autocurricula whereby competing agents continually create new tasks for each other.

Inducing autocurricula in physically grounded and open-ended environments could eventually enable agents to acquire an unbounded number of human-relevant skills.

In other words, having AI models compete in an unsupervised manner may be a far better way to develop useful and robust skills than letting them toddle around on their own, racking up an abstract number like percentage of environment explored or the like.

Increasingly it is difficult or even impossible for humans to direct every aspect of an AI’s abilities by parameterizing it and controlling the interactions it has with the environment. For complex tasks like a robot navigating a crowded environment, there are so many factors that having humans design behaviors may never produce the kind of sophistication that’s necessary for these agents to take their place in everyday life.

But they can teach each other, as we’ve seen here and in GANs, where a pair of dueling AIs work to defeat the other in the creation or detection of realistic media. The OpenAI researchers posit that “multi-agent autocurricula,” or self-teaching agents, are the way forward in many circumstances where other methods are too slow or structured. They conclude:

“These results inspire confidence that in a more open-ended and diverse environment, multi-agent dynamics could lead to extremely complex and human-relevant behavior.”

Some parts of the research have been released as open source. You can read the full paper describing the experiment here.